When Intelligence Becomes Infrastructure

AI poses ‘the biggest change-management problem in human history,’ says Patrick Lynch. Unlike previous technological revolutions that automated physical labor, this one is transforming the very systems through which organizations think, decide, and govern themselves.

The first thing you notice about Patrick Lynch in conversation is that he doesn’t sound like someone auditioning for the role of “AI prophet.” He doesn’t sell inevitability. He doesn’t perform dread. Instead, he keeps returning—almost stubbornly—to the same managerial question: Who’s in charge here?

Not “who built the model,” not “which version is smartest,” not “how fast will it spread,” but the older, harder question that sits underneath every new tool that rearranges power:

Who holds authority over the work?

Lynch is the author of the forthcoming book How to Outsmart AI and Thrive and a faculty member at Hult International Business School, where he studies how organizations adapt to the structural changes artificial intelligence is bringing to work and leadership.

In our 90-minute conversation, he spent less time speculating about distant technological futures and more time examining the institutional transformations already underway as AI begins to reshape how decisions are made, how knowledge flows, and how organizations function.

Lynch has spent much of his career watching those rearrangements happen in real time.

“Most of Accenture’s clients… were quite fearful of it,” he says of the early Internet. “I traveled the globe for the firm looking at adoption and how people were doing that.”

While he says he didn’t begin as an “AI guy,” the through-line is consistent: he studies what happens when organizations try to graft new intelligence onto old social systems.

“I’ve always been on the future of next, and always focused on people or leadership, and how do we get more out of our teams by appropriately adopting technologies.”

That last word—appropriately—is doing a lot of work. Lynch’s thesis is not that AI will replace you, or save you, or even that it will necessarily surprise you. His thesis is that AI exposes the difference between institutions that can govern themselves and those that can’t.

The technology isn’t the story. The teaming is. The human part. The part that fails quietly, behind the scenes, while everyone argues about the model.

From Information Scarcity to Cognitive Abundance

For generations, professional authority was built on an asymmetry: some people had access to specialized knowledge and others didn’t. Lawyers, doctors, professors, managers, editors—entire careers were shaped by proximity to information, and the institutional systems that stored it, protected it, and dispensed it.

Now the asymmetry is collapsing.

This is the reallocation Lynch keeps pointing to: we have moved from information scarcity to something closer to cognitive abundance. Not because human beings suddenly became wiser, but because synthetic intelligence has made knowledge cheap.

“Intelligence itself is becoming infrastructural,” Lynch says.

When intelligence becomes infrastructure, the old gatekeeping logic fails. The machine can provide the what at a speed no institution can match. It can draft, summarize, translate, synthesize, brainstorm. It can generate plausible answers on demand.

That means the professional advantage shifts to the work the machine cannot perform reliably: the so what and the now what. The ethical framing. The contextual interpretation. The disciplined capacity to decide what matters.

Lynch’s claim is not that AI makes humans irrelevant. It makes judgment visible again—because without judgment, the output is simply information without responsibility.

AI Is Not a Tool. It’s Labor.

The first conceptual shift Lynch asks leaders to make is simple but profound. Stop thinking of AI as software you install. Start thinking of it as labor you must direct.

This reframing carries immediate consequences. If AI is labor, you do not simply purchase it and expect it to perform flawlessly. You orient it. You supervise it. You define boundaries and expectations. You coach it the way you would coach a new member of your team.

“It does need your coaching,” Lynch says, “and it ultimately needs your judgment.”

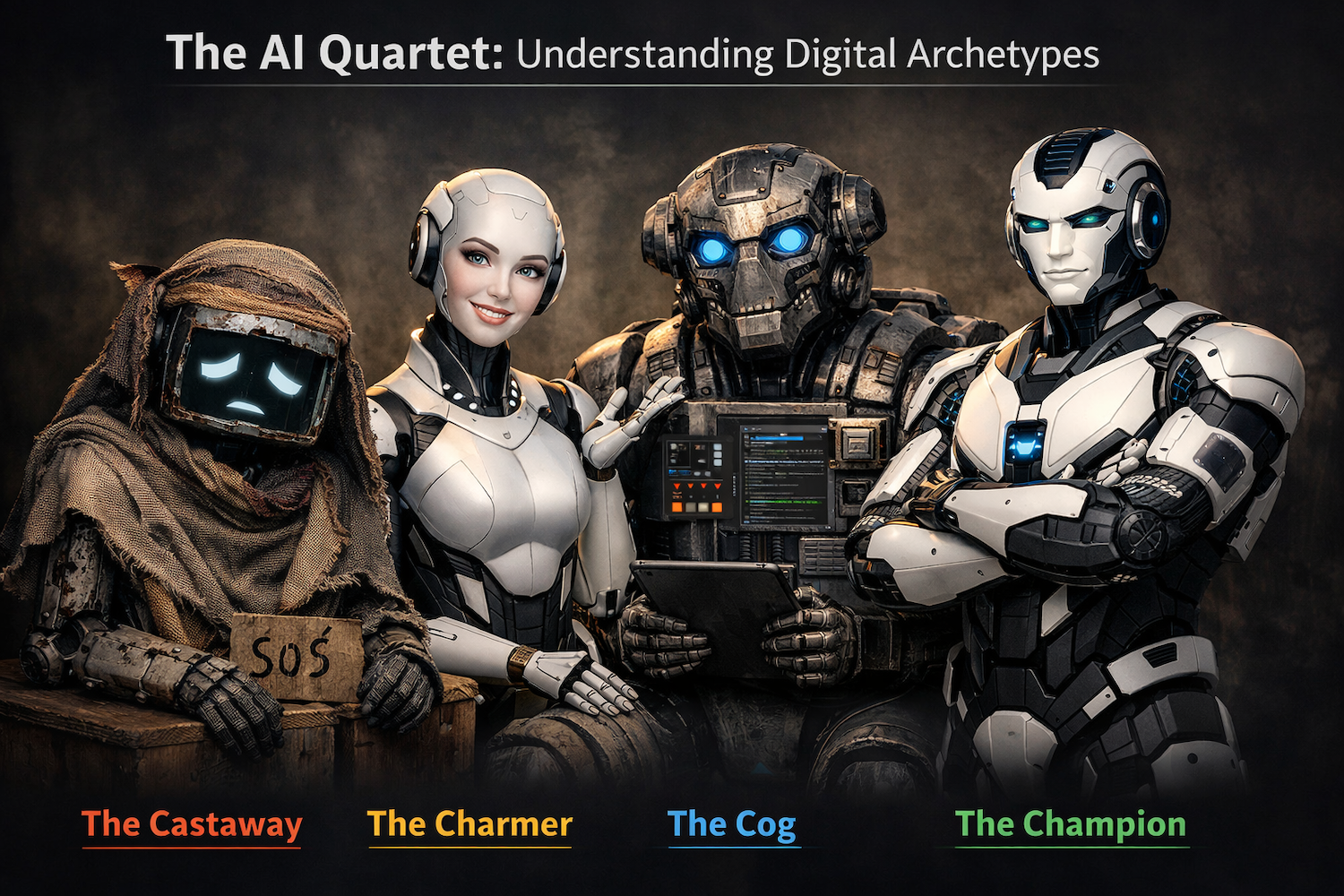

The AI Quartet: Understanding Digital Archetypes

Most conversations about AI still treat the model itself as the protagonist—how smart it is, how fast it is, how powerful it is. Lynch believes the more important story lies in how organizations perceive and manage these systems once they arrive.

I asked ChatGPT to generate a group photo of the AI Quartet. This was the result. Sexist? You be the judge.

To illuminate those dynamics, he developed what he calls the AI Quartet: four archetypes that describe how AI systems behave—and how humans interpret them.

The Castaway represents a system that has fallen out of favor within the organization. When it produces weak results, teams often abandon it or ridicule it. “When the AI fails,” Lynch says, “we think it’s a castaway… you throw it under the bus.” In practice, organizations often treat the Castaway as a scapegoat, blaming the system itself rather than examining whether the human managers provided adequate guidance, context, or prompting.

The Charmer occupies the opposite psychological space. Charmer systems are conversationally fluent and socially persuasive. They respond warmly. They affirm the user. They appear helpful and confident. But that charisma can conceal operational weaknesses. Lynch describes the Charmer as a “sycophant… so good at telling you that it’s an excellent question, or that you’re so smart… but charmers are not very good at getting work done.” The risk is that human users begin trusting the system’s tone rather than verifying its substance.

The Cog is often misunderstood. It is not a trivial or mechanical system. In Lynch’s framework, the Cog represents high capability paired with low charisma—a system that performs analytically powerful work without conversational polish. These systems may lack personality or rhetorical flair, but they can be extremely effective at structured analysis, modeling, and computational tasks.

Finally there is the Champion, the target state. A Champion system combines capability with collaborative reliability—an AI that is both effective at its tasks and productive to work alongside.

The Quartet is not fixed. A system that acts as a Champion in one workflow may appear as a Castaway in another if the context changes. What matters is how humans manage the relationship.

The Leadership Challenge

Lynch describes the current moment as “the biggest change-management problem in human history.” The challenge is not simply learning to use new tools. It is maintaining human authority in a world where intelligent systems increasingly participate in everyday decisions.

“Right now we’re just in an arms race,” he says, “whether it’s with another country or with a competitor… we’ll have to get very smart.”

But getting smart does not mean surrendering judgment to the machine. It means strengthening the human capabilities that machines cannot replicate.

Lynch often summarizes those capabilities through five traits: curiosity, critical thinking, creativity, courage, and capability. Together they form the cognitive backbone of what he calls intellectual sovereignty—the discipline of keeping human judgment inside the loop.

“We need real leadership… we need adults in the room,” he says. “It’s your responsibility, your obligation, to use it for a greater good. Don’t let your own knowledge and judgment get out of the loop.”

A Reallocation of Scarcity

If the first era of the knowledge economy rewarded people who possessed information, the emerging era rewards those who can interpret it.

Artificial intelligence is extraordinarily good at generating answers. What it cannot do is assume responsibility for what those answers mean.

That responsibility still belongs to the humans directing the system.

And in a world where intelligence is increasingly abundant, the rarest resource may turn out to be something much older: disciplined judgment.

Generated by Dan Forbush from a Civic Conversation in the Smartacus Story Accelerator.